That’s the question, and we really won’t know whether our batteries lasted 30 years when they could have made it to 35, or whether we added 5 years to them. I guess nobody really knows.

What I do know is I’m going against Victron’s LiFePO4 profile and LiTime’s settings, and going with this group’s advanced thinking approach.

If the batteries can be charged to 3.5 V per cell (14 V for the pack) no matter what charge rate is being allowed, then I’ll set the chargers to 14 V and let them run. Some days will get less charge and some days more, but I’m not going to babysit batteries every day.

If it is beneficial to charge them every 6 months until the BMS shuts them down, then I’ll do that also.

I’m not looking to change minds or offer suggestions. I’m looking for someone to tell me how to set my solar chargers and let them work.

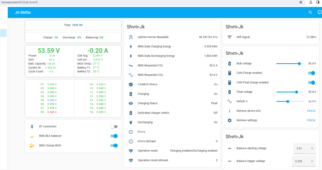

I’ll see if I can attach a snap of my solar settings, and the history graph of what these settings do. I get a big hockey stick when the charge voltage gets above 13.6 V. It climbs rapidly to 14 V or 14.2 V, whatever I have set.

I can relate to what you're saying in this thread, and I also use a Victron controller. If you don't have perfect knowledge then there is no perfect way, only acceptable compromises.

For example, unless your charge controller reads a shunt at your battery, the charge controller cannot know the current flowing into your battery. It only knows its own output current, which is the total of the inverter current plus the battery charge current. Like you have mentioned, the inverter current can randomly be high or low, and that will mess with the current taper cutoff in the controller. Another issue is that the Victron doesn't have voltage sense cables. It measures the battery voltage at its own battery terminals, so the reading includes the voltage drop across the cables. Unless you get an external sense dongle, it will only be accurate at low currents.
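To put numbers on the taper cutoff problem, here is a rough sketch in Python. The 2 A tail current, the 11 A controller output and the 10 A inverter draw are made-up example values, and the function names are only for illustration. They are not Victron settings or a Victron API.

```python
# Rough illustration of why a tail-current cutoff is fooled by inverter load.
# All numbers and names here are hypothetical examples, not Victron values.

def battery_current(controller_output_a: float, load_a: float) -> float:
    """Current actually flowing into the battery, in amps."""
    return controller_output_a - load_a


def absorption_ends(measured_a: float, tail_current_a: float) -> bool:
    """The taper cutoff triggers when the measured current drops below the tail current."""
    return measured_a < tail_current_a


TAIL_A = 2.0          # hypothetical tail-current setting
CONTROLLER_A = 11.0   # what the controller sees leaving its output terminals
INVERTER_A = 10.0     # what the inverter is drawing at that moment

# Without a shunt, the controller can only compare its own output current.
without_shunt = absorption_ends(CONTROLLER_A, TAIL_A)

# With a shunt at the battery, the real battery current is available.
with_shunt = absorption_ends(battery_current(CONTROLLER_A, INVERTER_A), TAIL_A)

print(f"battery current: {battery_current(CONTROLLER_A, INVERTER_A):.1f} A")
print(f"cutoff without shunt: {without_shunt}, with shunt: {with_shunt}")
```

In this example the battery is only taking 1 A and is essentially full, but the controller sees 11 A and keeps absorbing until the timer runs out.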

To make sense of this myself, here is how I deal with it: A battery getting slightly undercharged is no big deal. I seriously doubt it has any long term effect. Some say LFP has memory effects, but I've seen very little evidence of it. However, overcharging LFP on a daily basis probably degrades the battery, so I try to stay away from it.

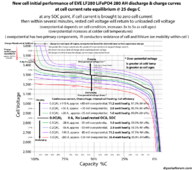

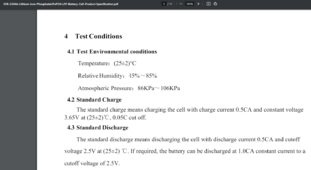

The only bulk voltage you can be 100% sure will not overcharge your battery is the float voltage, 3.37 V per cell. But of course that is not practical, because the charging would take forever. So instead you use 3.45 V to 3.55 V per cell and adjust your absorption time and current cutoff so that you get to 95-97% SOC in most common scenarios. You look at typical inverter current, typical controller current, and check the charge diagrams (a good one included below). You configure the controller to hit the desired SOC. Then you live with the fact that once in a blue moon it will be over 100%. Also keep in mind that LFP cells are not kittens. They are designed to be durable and tough, to be used in EVs.
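Since the controller is set in pack volts rather than cell volts, here is a quick conversion of those numbers. It assumes a 12 V battery with 4 cells in series, which matches the 3.5 V = 14 V figure earlier in the thread. Change the cell count for a 24 V or 48 V pack.

```python
# Convert per-cell voltages to pack voltages for the charge controller.
# Assumption: 4 cells in series (a "12 V" LFP battery).

CELLS_IN_SERIES = 4


def pack_voltage(cell_v: float, cells: int = CELLS_IN_SERIES) -> float:
    """Pack voltage for a given per-cell voltage, rounded to 0.01 V."""
    return round(cell_v * cells, 2)


for label, cell_v in [
    ("float", 3.37),
    ("absorption, low end", 3.45),
    ("absorption, high end", 3.55),
]:
    print(f"{label}: {cell_v:.2f} V/cell -> {pack_voltage(cell_v):.2f} V pack")
```

So the 3.45 V to 3.55 V range works out to 13.8 V to 14.2 V on a 12 V pack, with float at about 13.5 V.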

Or you just ignore all of the theory as pointless navel gazing, and just do what Victron tells you. I suspect that they know more about this than all of us together.